Last December, a photographer in San Francisco discovered her face on a billboard in Seoul advertising a product she’d never heard of. She hadn’t sold the rights to her image, nor had she given permission for its use. The culprit wasn’t traditional copyright infringement but something more insidious: her likeness had been scraped from her public Instagram account, fed into an AI training dataset, and synthesized into commercial content halfway across the world. Her experience is not unique.

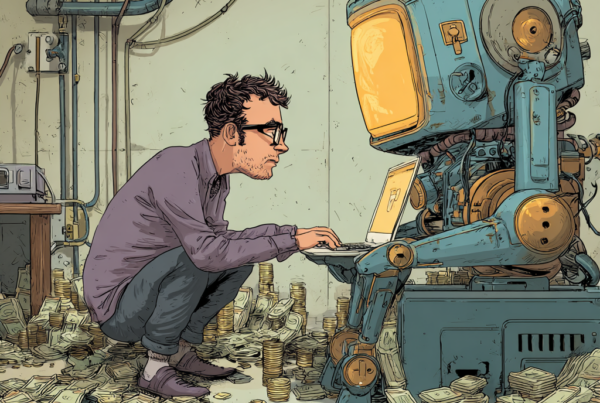

Every digital footprint you leave—every email, social media post, photo upload, or product review—potentially feeds the insatiable appetite of artificial intelligence systems. The uncomfortable truth is that your personal data may be training someone else’s AI without your knowledge or explicit consent. As these systems proliferate across industries and borders, understanding the privacy implications has become essential for everyone navigating the digital landscape.

The Invisible Harvest: How Your Data Becomes AI Fuel

When OpenAI’s ChatGPT burst into public consciousness in late 2022, few users paused to consider what information had shaped its responses. The reality is sobering: large language models are typically trained on vast troves of text scraped from the internet, including personal blogs, forum posts, and social media conversations. Your impassioned Reddit comment from 2017 may well have helped an AI learn to mimic human communication patterns.

“Most people fundamentally misunderstand the relationship between their data and commercial AI systems,” explains Dr. Emily Wenger, a privacy researcher at the University of Chicago. “They imagine their information sits in some isolated database. In reality, it’s being aggregated, analyzed, and used to teach machines how to generate new content that can be monetized without their participation in those profits.”

The mechanics of this data harvesting are deliberately obscured. Tech companies rely on vague terms of service and privacy policies that few users read and even fewer comprehend. These documents often include broad language granting companies the right to use user-generated content for machine learning purposes. The legal framework remains murky, with regulations like Europe’s GDPR offering some protections that America’s patchwork privacy laws do not.

The Paradox of Personalization and Privacy

The modern internet presents users with a Faustian bargain: surrender personal data for convenience and personalization. We’ve grown accustomed to services that seem to read our minds, recommending products we didn’t know we wanted and content tailored to our interests. This customization, however, comes at a cost that extends beyond targeted advertising.

“AI systems require vast amounts of personal data to function effectively,” notes Dr. Kate Crawford, author of Atlas of AI. “The problem isn’t just that our data is being collected, but that it’s being used to build systems that may ultimately undermine our autonomy or be deployed against our interests.”

Consider facial recognition technology. Images uploaded to social media platforms have helped train algorithms now used in surveillance systems worldwide. Clearview AI, for instance, built its facial recognition database by scraping billions of photos from social media and other public websites, then sold access to law enforcement agencies. The photos you shared to connect with friends may now help identify protesters in authoritarian regimes or track consumers through retail environments. This secondary use of personal data, far removed from its original context, represents a profound shift in the privacy landscape, one that few people consented to or anticipated.

Digital Self-Defense: The Basics Everyone Should Know

While perfect privacy may be unattainable in today’s connected world, informed choices can significantly reduce your digital vulnerability. Understanding a few fundamentals can help you navigate the AI-driven landscape more safely.

First, audit your digital footprint regularly. Review privacy settings across platforms and check what information about you is publicly accessible, for example by viewing your profiles while logged out or searching for your own name. Tools like Google Takeout let you download the data a company holds on you, offering insight into what has been collected. This transparency, while limited, is a starting point for reclaiming some control.

Second, be strategic about content sharing. Before posting photos, writing reviews, or participating in viral challenges, consider whether you’re comfortable with that content potentially training commercial AI systems. Some platforms now offer options to opt out of AI training, though these settings are often buried deep within privacy menus.

Third, support privacy-focused alternatives. Organizations like DuckDuckGo, Proton, and the nonprofit Signal Foundation have built services that don’t rely on data harvesting. Their growing popularity demonstrates a market for privacy-respecting technologies that could reshape industry standards.

Finally, advocate for structural change. Individual actions alone cannot address systemic issues. “We need legal frameworks that recognize data rights as fundamental human rights,” argues Evan Greer, director of the digital rights organization Fight for the Future. “This includes the right to know how your data is being used, meaningful consent mechanisms, and the ability to withdraw your information from AI training sets.”

The Future of Digital Dignity

The relationship between personal data and artificial intelligence is not predetermined. As AI systems become more sophisticated and ubiquitous, society faces crucial choices about how to balance innovation with privacy protection. The current trajectory, in which data extraction happens by default and opting out is the exception, reflects power imbalances rather than technological necessity.

Emerging models suggest alternatives. Some researchers advocate for data trusts—legal structures that would manage personal information collectively, negotiating with companies on behalf of users. Others propose compensation schemes where AI developers would pay for training data, transforming the current extractive relationship into an economic exchange.

What’s clear is that the status quo is unsustainable. As AI capabilities advance, the stakes of privacy decisions grow higher. Your casual social media posts today might train tomorrow’s AI systems that generate convincing deepfakes or predict personal behaviors with uncomfortable accuracy.

The question is not whether AI will transform society, but whether that transformation will respect human dignity and agency. The answer depends not just on technological development but on the social contracts we establish around data ownership and use. As we navigate this shifting landscape, informed vigilance remains our best defense against becoming unwitting contributors to AI systems that may not align with our values or interests.